S3 now offers a fully-managed SFTP Gateway Service for S3 that integrates with IAM and can be administered using aws-cli.

There are theoretical and practical reasons why this isn't a perfect solution, but it does work.

You can install an FTP/SFTP service (such as proftpd) on a Linux server, either in EC2 or in your own data center, then mount a bucket into the filesystem where the FTP server is configured to chroot, using s3fs.

I have a client that serves content out of S3, and the content is provided to them by a 3rd party who only supports FTP pushes. So, with some hesitation (due to the impedance mismatch between S3 and an actual filesystem) but lacking the time to write a proper FTP/S3 gateway server software package (which I still intend to do one of these days), I proposed and deployed this solution for them several months ago, and they have not reported any problems with the system.

As a bonus, since proftpd can chroot each user into their own home directory and "pretend" (as far as the user can tell) that files owned by the proftpd user are actually owned by the logged-in user, this segregates each FTP user into a "subdirectory" of the bucket and makes the other users' files inaccessible.

There is a problem with the default configuration, however. Once you start to get a few tens or hundreds of files, the problem will manifest itself when you pull a directory listing, because ProFTPd will attempt to read the .ftpaccess files over, and over, and over again: for each file in the directory, .ftpaccess is checked to see if the user should be allowed to view it.

You can disable this behavior in ProFTPd, but I would suggest that the most correct configuration is to configure the additional options -o enable_noobj_cache -o stat_cache_expire=30 in s3fs:

-o stat_cache_expire (default is no expire)
Specify expire time (seconds) for entries in the stat cache.

Without this option, you'll make fewer requests to S3, but you also will not always reliably discover changes made to objects if external processes or other instances of s3fs are also modifying the objects in the bucket. The value "30" in my system was selected somewhat arbitrarily.

-o enable_noobj_cache (default is disable)
Enable cache entries for the object which does not exist. s3fs always has to check whether a file (or subdirectory) exists under an object (path) when s3fs does some command, since s3fs has recognized a directory which does not exist and has files or subdirectories under itself. It increases ListBucket requests and makes performance bad. You can specify this option for performance; s3fs memorizes in the stat cache that the object (file or directory) does not exist.

With this option, s3fs can remember that .ftpaccess was not there instead of asking S3 again for every file in the listing.
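The effect of the two s3fs cache options discussed above can be illustrated with a toy model. This is a minimal Python sketch, not s3fs's actual implementation: the class `ToyStatCache`, the helper `count_backend_requests`, and the example path are hypothetical names chosen for illustration. It counts how many backend lookups are caused by repeatedly probing a nonexistent .ftpaccess file, with and without caching of missing objects.

```python
import time


class ToyStatCache:
    """Toy stat cache with optional negative entries and expiry.

    Illustrative only; this is NOT how s3fs is implemented internally.
    """

    def __init__(self, backend_lookup, cache_missing=False,
                 expire_seconds=None, clock=time.monotonic):
        self._lookup = backend_lookup        # callable: path -> bool (one "S3 request")
        self._cache_missing = cache_missing  # loosely mirrors enable_noobj_cache
        self._expire = expire_seconds        # loosely mirrors stat_cache_expire
        self._clock = clock
        self._entries = {}                   # path -> (exists, stored_at)

    def exists(self, path):
        entry = self._entries.get(path)
        if entry is not None:
            found, stored_at = entry
            fresh = (self._expire is None
                     or self._clock() - stored_at < self._expire)
            if fresh:
                return found                 # answered from cache, no request
            del self._entries[path]          # entry expired, ask the backend again
        found = self._lookup(path)
        # Existing objects are always cached; missing ones only if enabled.
        if found or self._cache_missing:
            self._entries[path] = (found, self._clock())
        return found


def count_backend_requests(cache_missing, probes=100):
    """Probe a file that never exists and count the backend requests made."""
    requests = []

    def backend(path):
        requests.append(path)
        return False                         # .ftpaccess is never there

    cache = ToyStatCache(backend, cache_missing=cache_missing, expire_seconds=30)
    for _ in range(probes):
        cache.exists("/home/user/.ftpaccess")
    return len(requests)


if __name__ == "__main__":
    print("without negative cache:", count_backend_requests(False))
    print("with negative cache:   ", count_backend_requests(True))
```

In this model, every probe of the missing file reaches the backend unless missing objects are cached, and the expiry bounds how long a stale answer can survive when something else changes the bucket.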

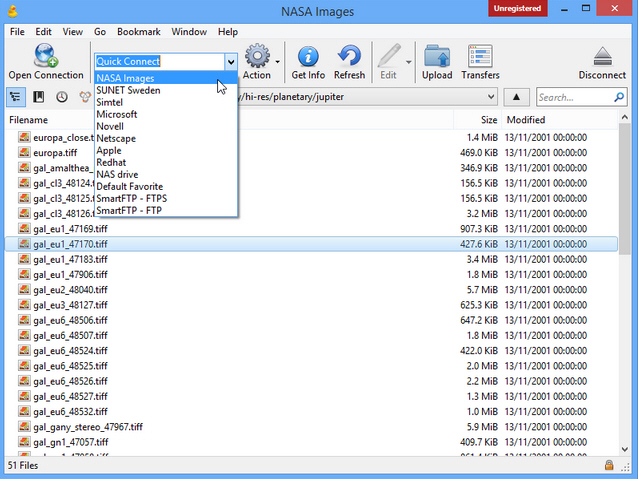

You can use a native Amazon Managed SFTP service (aka AWS Transfer for SFTP), which is easier to set up. Or you can mount the bucket to a file system on a Linux server and access the files using SFTP like any other files on the server (which gives you greater control). Or you can just use a (GUI) client that natively supports the S3 protocol (which is free).

For the managed SFTP service: in your Amazon AWS Console, go to AWS Transfer for SFTP and create a new server. In the SFTP server page, add a new SFTP user (or users). Permissions of users are governed by an associated AWS role in the IAM service (for a quick start, you can use the AmazonS3FullAccess policy). The role must also have a trust relationship to the AWS Transfer service. For details, see my guide Setting up an SFTP access to Amazon S3.

To mount the bucket to a Linux server: just mount the bucket using the s3fs file system (or similar) to a Linux server (e.g. Amazon EC2) and use the server's built-in SFTP server to access the bucket. Add your security credentials in the form access-key-id:secret-access-key to /etc/passwd-s3fs. Add a bucket mounting entry to fstab:

/mnt/ fuse.s3fs rw,nosuid,nodev,allow_other 0 0

Or use any free "FTP/SFTP client" that is also an "S3 client", and you do not have to set up anything on the server side. Such a client may also offer a .NET/PowerShell interface, if you need to automate the transfers.
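The two configuration lines from the mounting steps can be sketched as small helpers. This is only an illustration under stated assumptions: the function names, the bucket name `example-bucket`, and the dummy keys are hypothetical, and the field layout (bucket, mount point under /mnt, type, options) is assumed from the usual fstab format, since the entry as quoted does not show a bucket name. Passing the s3fs cache options through the fstab options field is likewise an assumption.

```python
def passwd_s3fs_line(access_key_id, secret_access_key):
    """Render a credentials line in the access-key-id:secret-access-key form."""
    return f"{access_key_id}:{secret_access_key}"


def fstab_entry(bucket, mount_root="/mnt", extra_options=()):
    """Render an fstab line for an s3fs mount.

    Assumed layout: <bucket> <mount point> fuse.s3fs <options> 0 0
    """
    options = ["rw", "nosuid", "nodev", "allow_other", *extra_options]
    return f"{bucket} {mount_root}/{bucket} fuse.s3fs {','.join(options)} 0 0"


if __name__ == "__main__":
    # Dummy values for illustration only; never hard-code real keys.
    print(passwd_s3fs_line("AKIDEXAMPLE", "not-a-real-secret"))
    print(fstab_entry("example-bucket"))
    # Assumption: cache options can be given as extra mount options.
    print(fstab_entry("example-bucket",
                      extra_options=("enable_noobj_cache", "stat_cache_expire=30")))
```

Generating the lines rather than typing them by hand mainly helps when several buckets are mounted with the same option set.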
